
My AI wrote garbage code. And that was exactly what I needed.

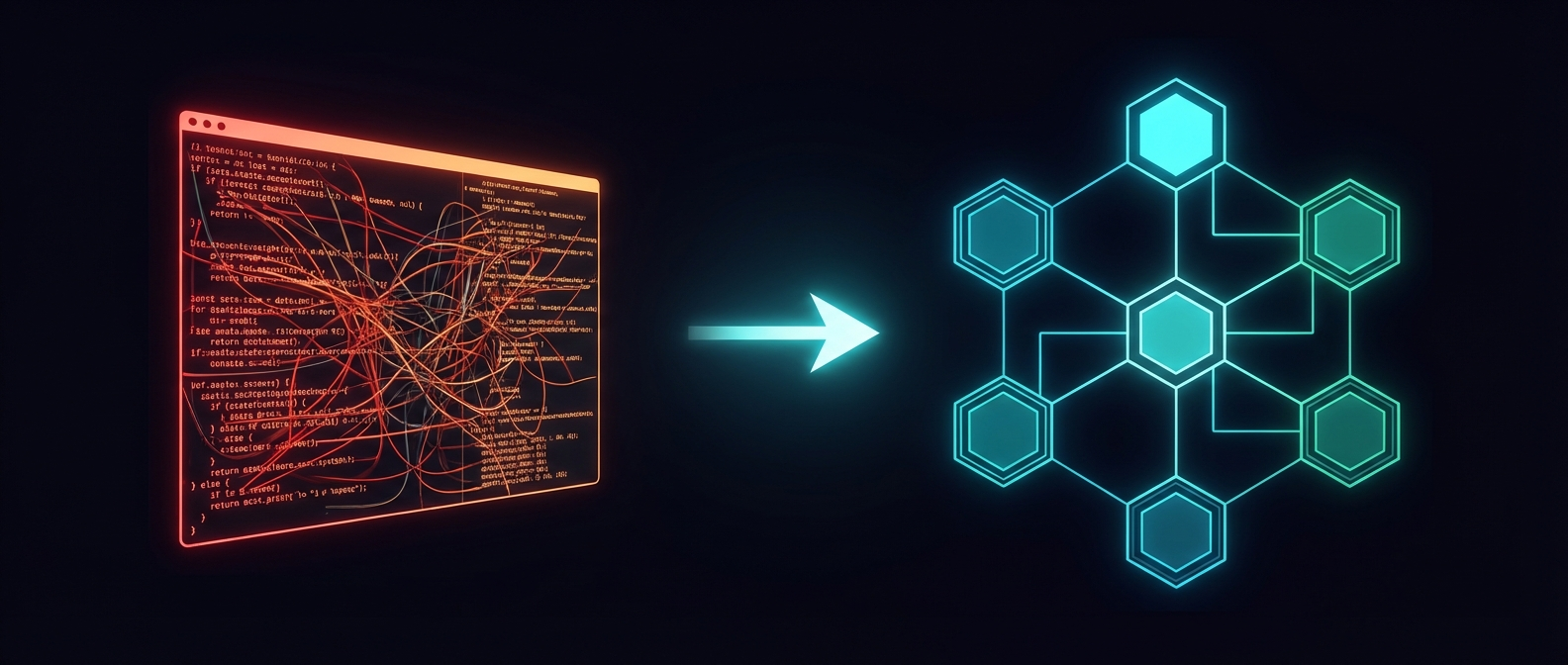

There is a widespread belief that when you use AI to code, you need to ask it to do things right from the start. Clean architecture, small files, quality tests. And if it doesn’t come out that way, either the tool is failing or you don’t know how to use it.

The reality is that this mindset can slow you down, especially in exploratory projects. And messy initial code is not necessarily a problem.

What I have confirmed across my last two projects is that the right moment to ask for clean code is the second phase, not the first.

This applies to full projects, but I see it just as clearly for large features within an existing project.

The TL;DR: your work on the code does not end when it works. It ends when the code is maintainable and scalable. And that does not come from a perfect first attempt, but from successive rounds of refactoring.

The beginning: a crashed server and a decision

Postiz, the tool I use to publish on social media, took down a server with 4 GB of RAM. I couldn’t even SSH in to stop Docker. I was locked out while it kept consuming resources.

It was interesting how AI helped me recover it. But I’ll save that for another post 😄

I had been tolerating its issues for a while: an unusable mobile UI, a footprint far too heavy for individual use, an inconsistent API. But that day gave me the push I needed to build my own tool.

The first version I had in mind was simple: a lightweight API wrapped with an MCP and a CLI, with a minimal UI just to review posts. Nothing interactive. Something that could run on a Raspberry Pi.

As I mentioned at the time, I started the project from my own Openclaw while the kids were playing at the park.

That night, back home, I tested it and it worked. Only X for now, which was the MVP I had set for myself.

And then I got carried away.

The moment the project got complicated

I asked Pencil to design a UI for me. The result was so good that I ended up building a full interface, 100% responsive. What was going to be headless became an app with its own UI.

The problem: the API the interface needed started evolving much faster than the CLI and MCP. I kept adding endpoints for the web without worrying about whether the other two channels had parity. Technical debt accumulated silently.

And the code… the main file reached 7,000 lines. One of the test files exceeded 3,000.

Everything worked.

And it was a mess.

And nothing was wrong with that.

Phase 2: when you sit down as an engineer

This is where a lot of people stop. With the project working, with bad code, the kind that gets criticized so much in vibe coding discussions.

But this is exactly where the engineering phase begins.

The first thing was architecture. With everything already working in front of me, I could analyze what fit best. We ruled out classic layered architecture because it would have unnecessarily complicated the application domain. We went with hexagonal.
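
To make the idea concrete, here is a minimal Python sketch of what hexagonal (ports and adapters) looks like in a tool like this one. It is not the project's real code: `Post`, `Publisher`, `SchedulePost` and `InMemoryPublisher` are hypothetical names I am using only for illustration. The core use case depends on a port, and each social network would plug in as an adapter.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Post:
    text: str
    network: str


class Publisher(Protocol):
    """Port: what the core needs from any social network adapter."""

    def publish(self, post: Post) -> str: ...


class SchedulePost:
    """Core use case. It depends only on the port, never on a concrete network."""

    def __init__(self, publisher: Publisher) -> None:
        self._publisher = publisher

    def execute(self, text: str, network: str) -> str:
        cleaned = text.strip()
        if not cleaned:
            raise ValueError("post text must not be empty")
        return self._publisher.publish(Post(text=cleaned, network=network))


@dataclass
class InMemoryPublisher:
    """Illustrative adapter; a real one would call the network's API instead."""

    sent: list[Post] = field(default_factory=list)

    def publish(self, post: Post) -> str:
        self.sent.append(post)
        # Return a fake identifier built from the network and a counter.
        return f"{post.network}-{len(self.sent)}"
```

In a layout like this, the API, the CLI and the MCP would all be thin entry points that call the same `SchedulePost`, which is a big part of why this style suited a tool with three channels.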

Before starting, I asked the agent to review the existing tests and add whatever was needed to make sure the refactor didn’t break the app. Current models are extremely good at refactoring, especially at this scale. But I didn’t want to take chances.

Once the app was restructured with the new architecture, we started splitting the large files. We settled on a soft limit of 500 lines per file.
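
A soft limit is easier to keep if something reports the offenders. Here is a small sketch of such a check, assuming Python sources under a single root folder. The function name `files_over_limit` and the `*.py` pattern are my own assumptions, not what the project actually uses.

```python
from pathlib import Path

SOFT_LIMIT = 500  # lines per file; a guideline, not a hard rule


def files_over_limit(
    root: Path, limit: int = SOFT_LIMIT, pattern: str = "*.py"
) -> list[tuple[str, int]]:
    """Return (relative path, line count) for every file longer than the limit."""
    offenders = []
    for path in sorted(root.rglob(pattern)):
        with path.open(encoding="utf-8", errors="replace") as handle:
            line_count = sum(1 for _ in handle)
        if line_count > limit:
            offenders.append((path.relative_to(root).as_posix(), line_count))
    return offenders
```

Because the limit is soft, a list like this works better as a report the agent reads than as a check that fails the build.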

Then came the parity problem. The API had features the CLI and MCP didn’t have. We set up a validation in the tests: if a feature exists in the API, the tests fail if it’s not also available in the CLI and MCP. That way the agent knows exactly when it’s done.

I also did a few iterations to improve web accessibility, using both the LLM’s own knowledge and dedicated accessibility validation tools.

And finally the tests themselves. That deserves its own paragraph.

The problem with AI-generated tests

AI tends to write white-box tests: it checks that a specific function was called with specific parameters. These are tests that break the moment you refactor, even if the behavior hasn’t changed at all.

In general, they add little beyond a false sense of coverage.

What I needed were black-box tests: you give it an input, you check the output. You don’t care how it’s implemented internally. Those tests survive refactors.
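
Here is the difference side by side, in a small Python sketch. The functions `normalize`, `truncate` and `prepare_post` are made up for this example and are not from my project.

```python
from unittest.mock import Mock, patch


def normalize(text: str) -> str:
    """Collapse runs of whitespace into single spaces."""
    return " ".join(text.split())


def truncate(text: str, limit: int) -> str:
    """Cut the text to the limit, ending with an ellipsis when shortened."""
    return text if len(text) <= limit else text[: limit - 1] + "…"


def prepare_post(text: str, limit: int = 280) -> str:
    return truncate(normalize(text), limit)


def test_white_box() -> None:
    # Brittle: it only checks that prepare_post calls truncate with certain
    # arguments. Inline truncate during a refactor and this test breaks,
    # even though the behavior is identical.
    fake_truncate = Mock(return_value="stub")
    with patch.dict(globals(), {"truncate": fake_truncate}):
        prepare_post("hello   world", limit=10)
    fake_truncate.assert_called_once_with("hello world", 10)


def test_black_box() -> None:
    # Robust: input in, output checked. The implementation is free to change.
    assert prepare_post("hello   world", limit=10) == "hello wor…"
    assert prepare_post("  short  ") == "short"
```

Both tests pass today. Only the second one keeps passing if `prepare_post` is rewritten without the `truncate` helper.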

I told the agent I preferred that style of test, and asked it to look for tests that simply validated that one function called another. There are a lot of those out there, and I suspect its training data makes it tend to write them. But they weren’t adding any value.

And yes, I did most of this work from Telegram, in spare moments I would otherwise have spent scrolling through social media.

What I want you to take away from this

The chaotic code from phase 1 was not a mistake. It was necessary to get as far as I did in so little time. Without that messy sprint, I might have gotten lost in the details early on and not had a working app today.

The mistake would be staying there. Or believing the agent is going to handle phase 2 on its own, without you making the engineering decisions.

AI is incredibly fast at building. But the technical direction is still yours.